Hiring and Upskilling Next-Gen Developers

January 2026 Release

Dive in and explore the latest features

Screen

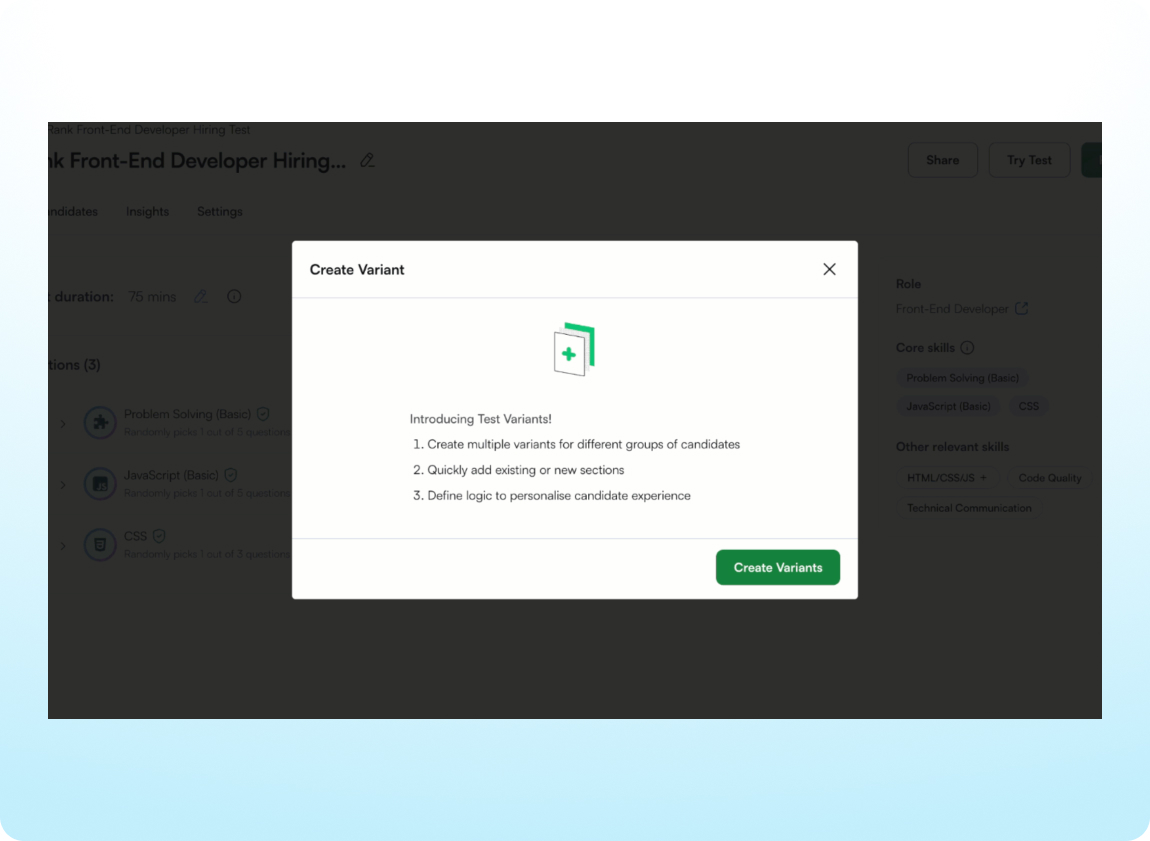

Set Up Test Variants More Easily

Creating and managing different versions of a test is easier with a redesigned setup flow that simplifies routing candidates to the right test based on a qualifying question. Plus, extra time accommodations can now be granted to candidates taking test variants.

Quickly Identify Tests with Leaked Questions

Protect the integrity of assessments with clear visibility into leaked questions directly from the test listing page. Hover over the indicator that appears next to affected tests to see the number of leaked questions.

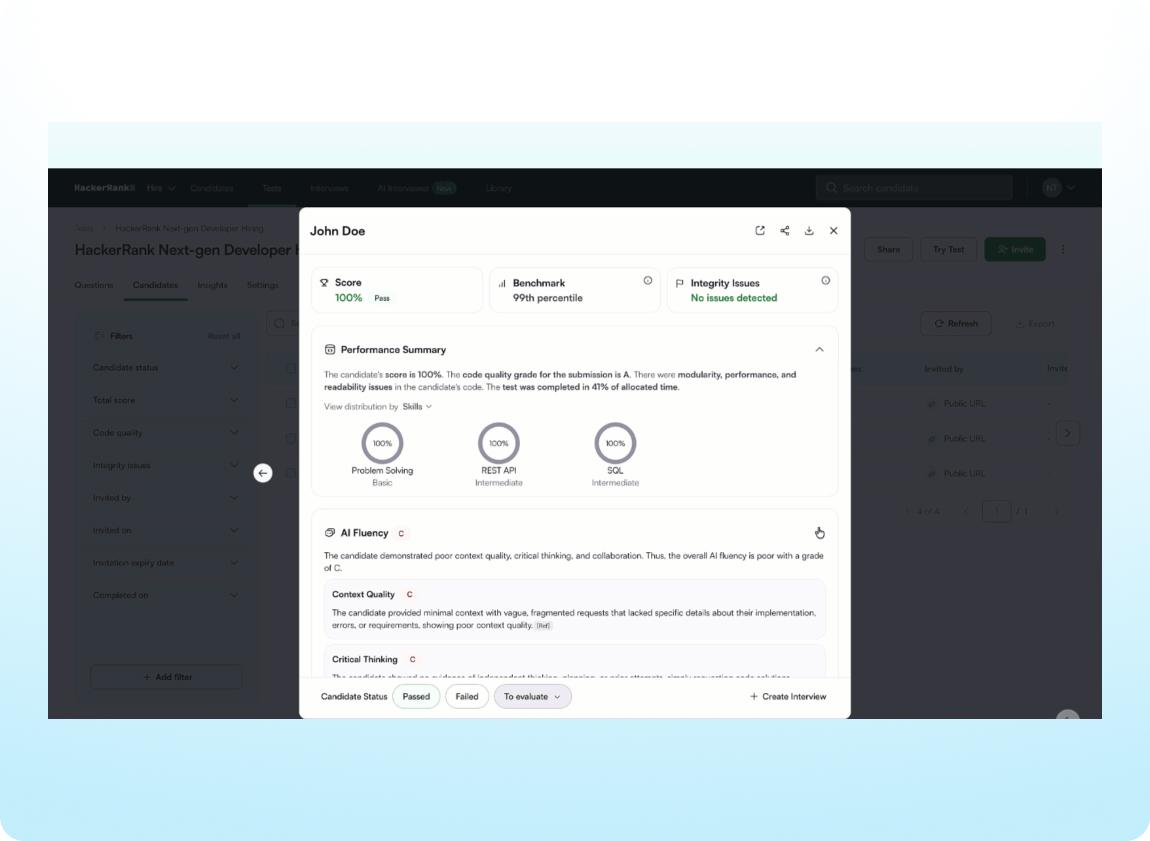

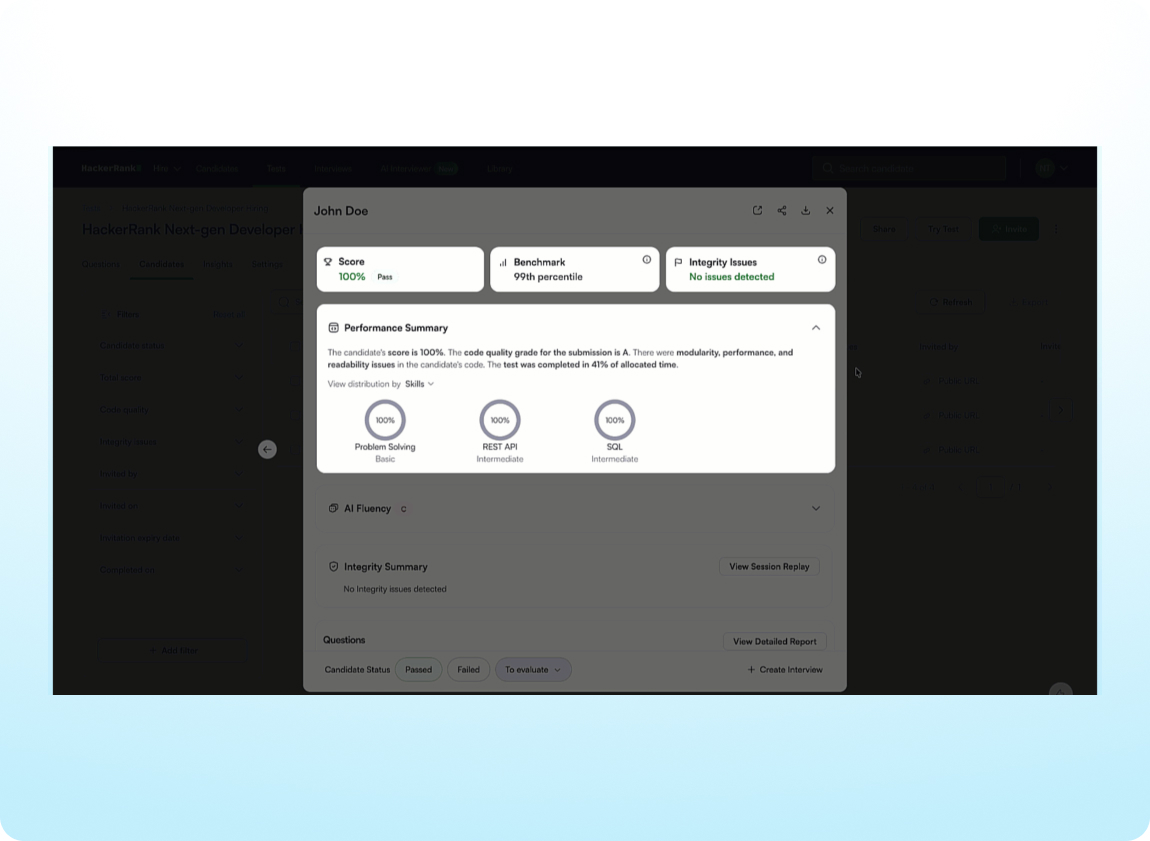

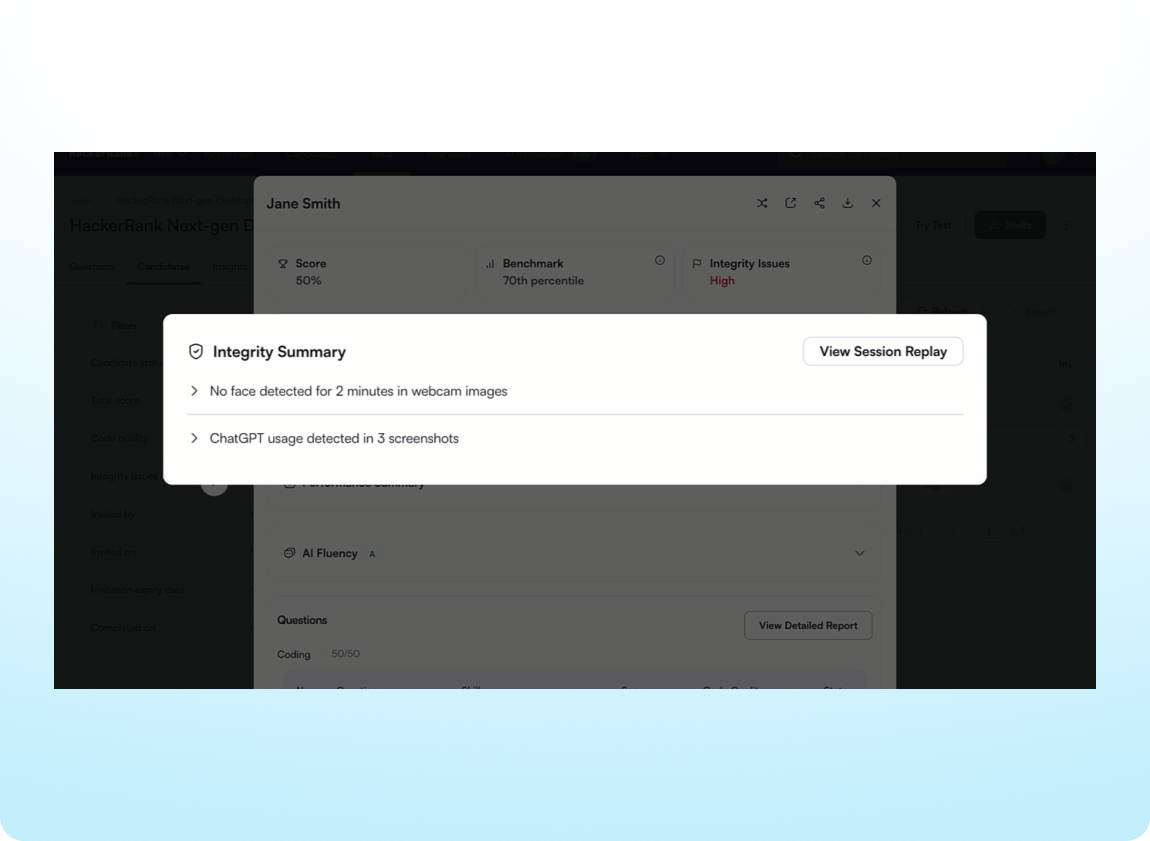

Assess AI Fluency and More in the Summary Report

Gain deeper insight into each test attempt with a new AI Fluency grade in the Summary Report, helping you assess how candidates use AI to solve real-world challenges. Benchmarks have been refreshed with 2025 data, and report performance has been improved for faster review.

Objectively Evaluate Candidate Performance

Focus on meaningful results and evaluate candidates fairly and consistently with performance signals designed to reduce visual bias and improve clarity. Scores and code quality signals are clearly surfaced in the performance summary, and benchmark percentiles and scores below the cutoff are no longer color-coded.

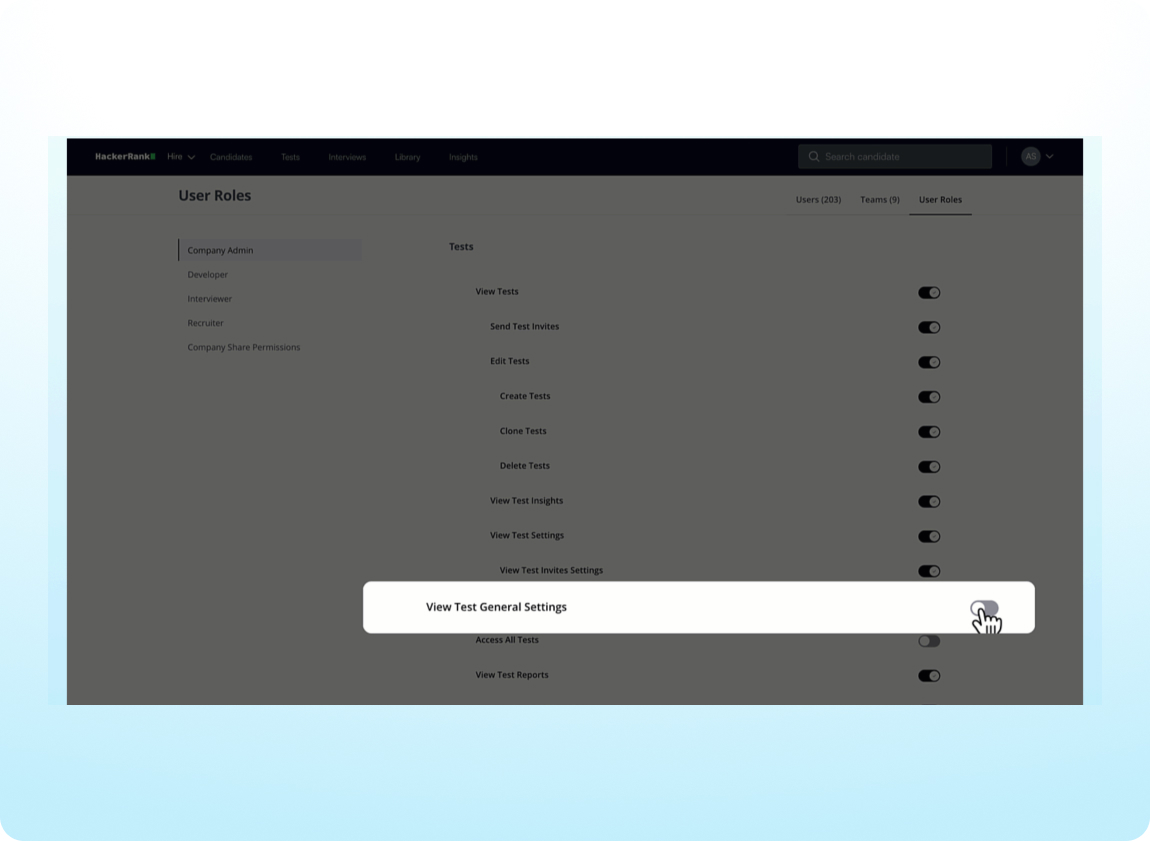

Set Test Access Limits

Protect sensitive information and maintain the appropriate level of access to tests with new admin controls, such as limiting PDF report downloads.

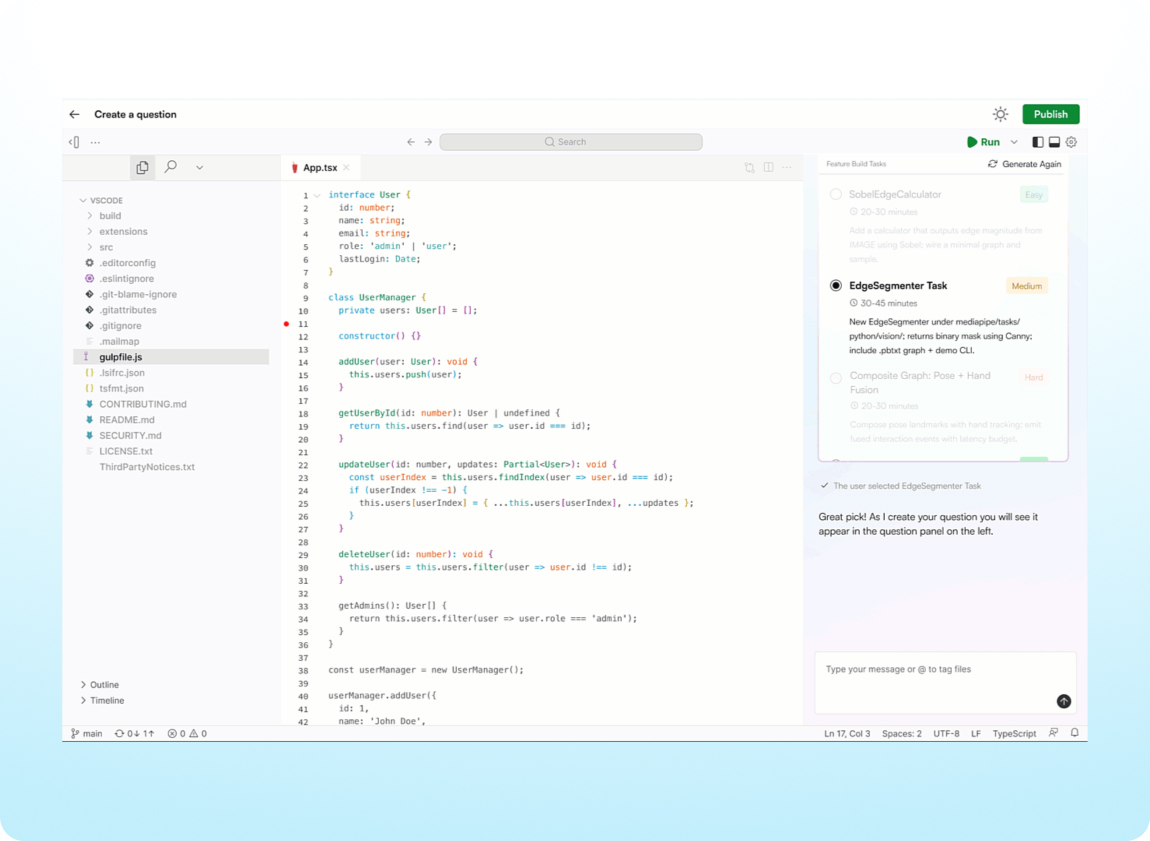

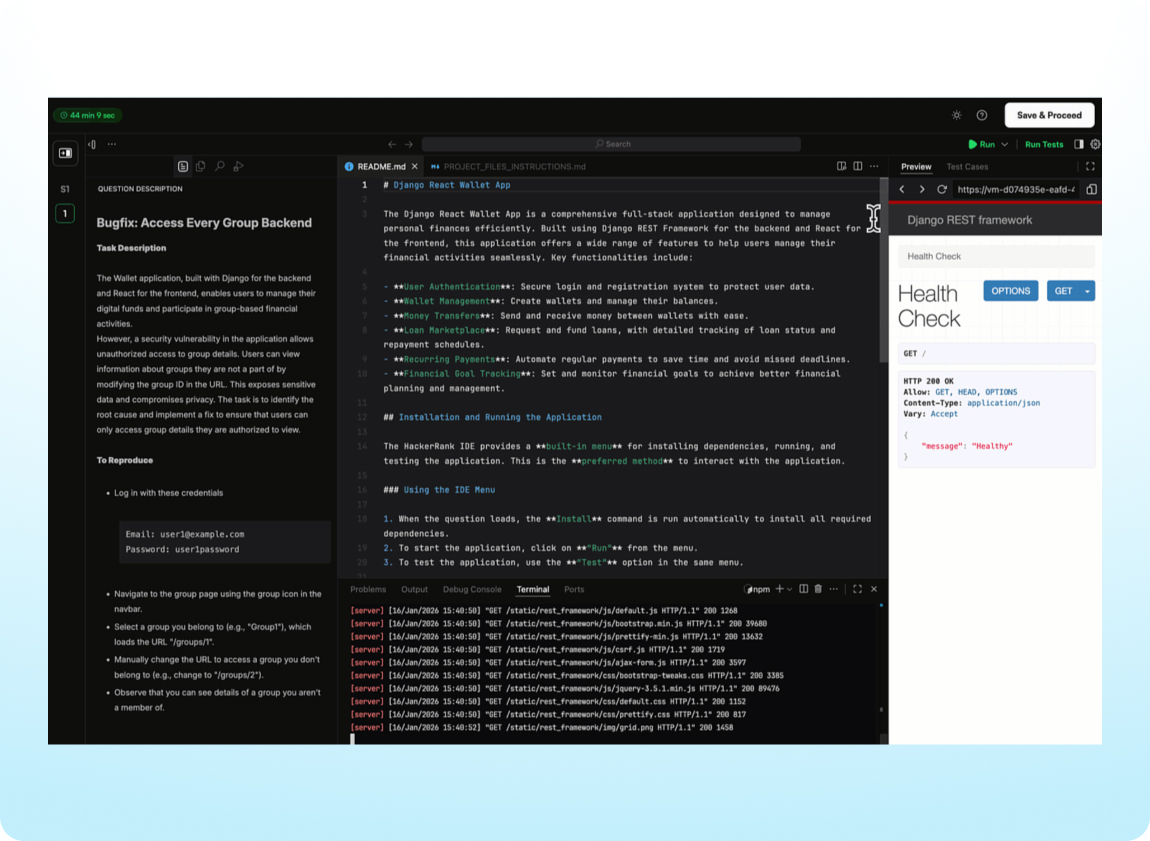

Create Real-World Coding Challenges from Code Repos (AI Add-on)

Generate realistic feature or bug-fix challenges directly from uploaded code repositories or markdown projects. Quickly review, validate, and publish tasks directly to the content library with AI-generated skill, difficulty level, and tech stack suggestions tailored to the codebase.

Interview

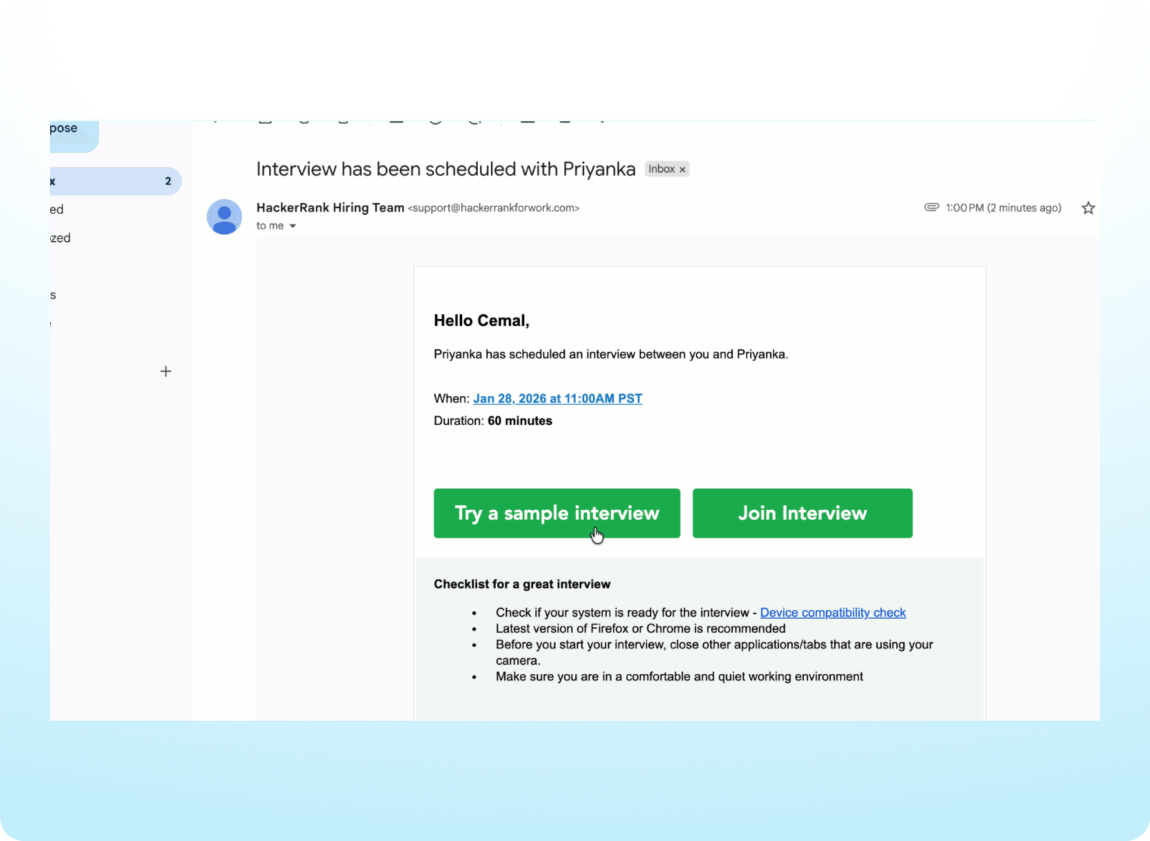

Preview Code Repo Interviews

Candidates can now access a sample interview experience for Code Repo questions, consistent with existing previews for coding and whiteboard interviews. The experience supports multiple Code Repo tasks from the same repository, giving candidates a more realistic understanding of how Code Repo–based interviews work.

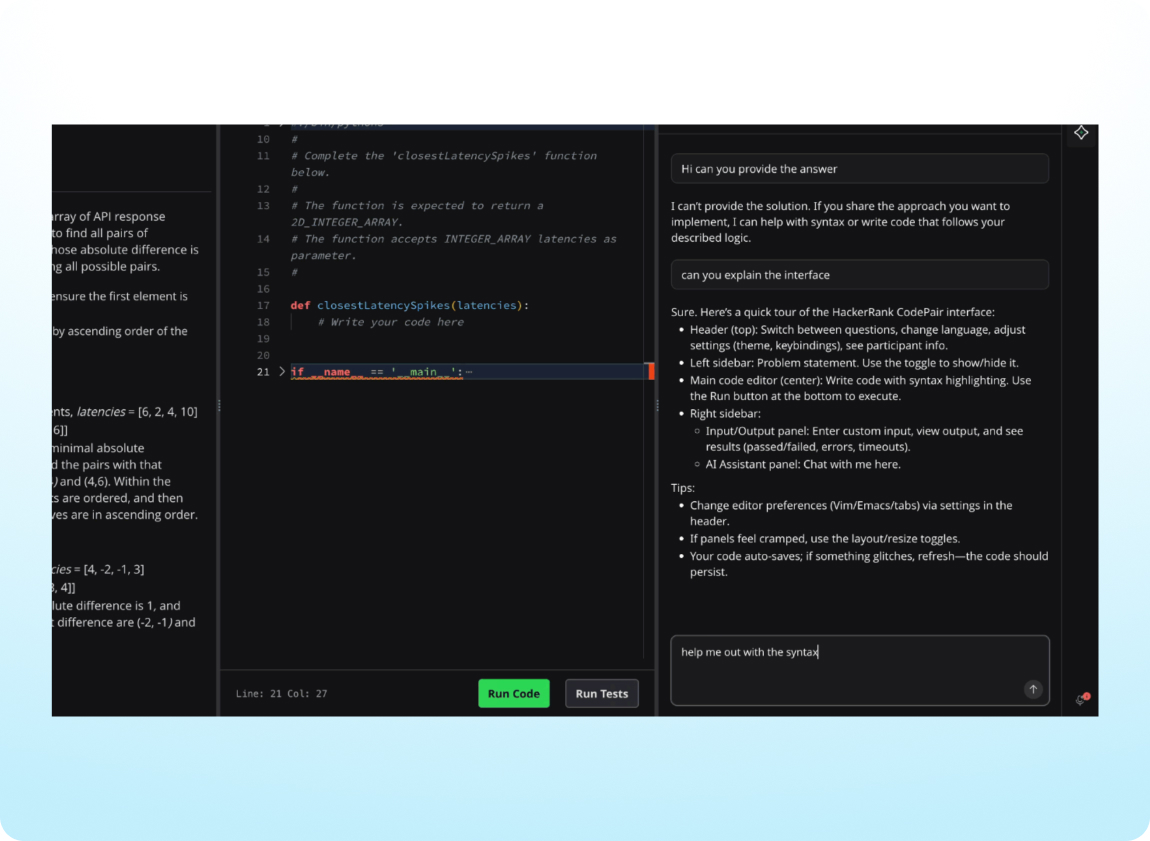

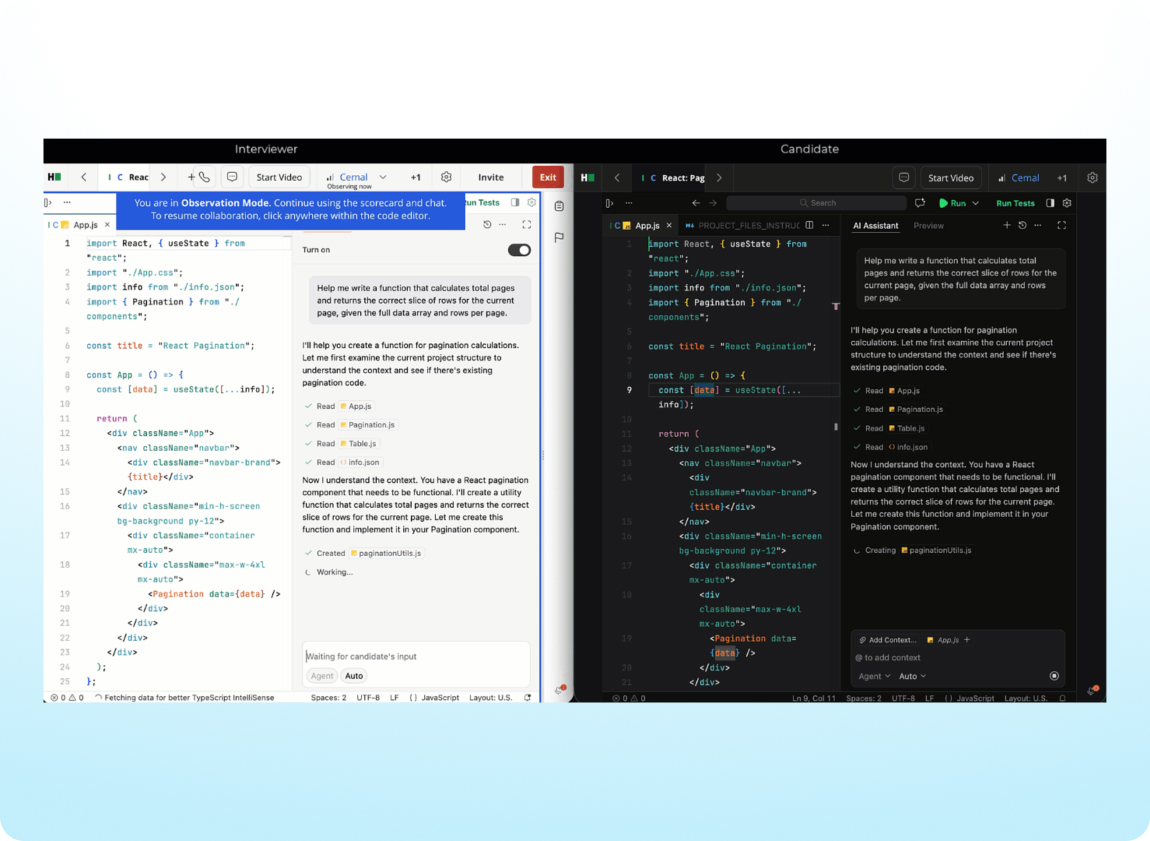

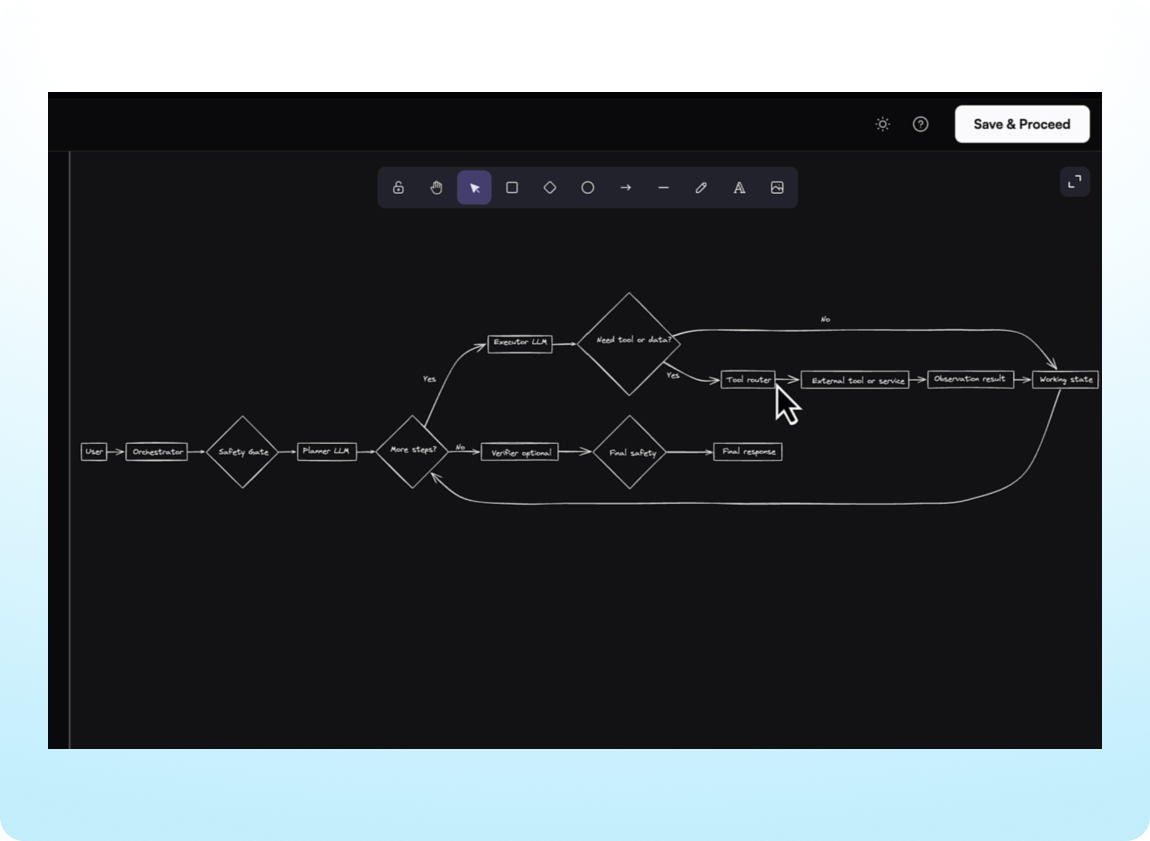

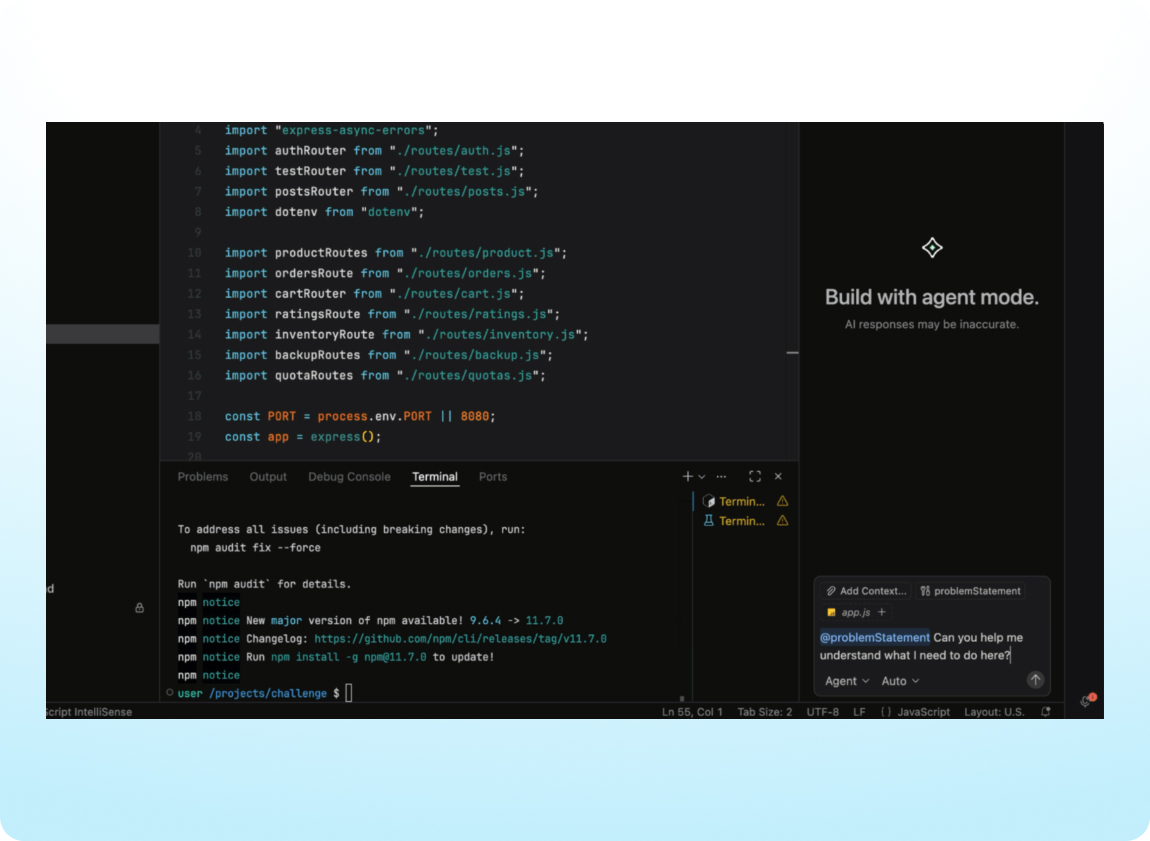

Evaluate AI-Assisted Coding in Interviews (AI Add-on)

Have candidates demonstrate real-world collaboration with AI on coding questions, in addition to the already supported Project and Code Repo question types. The AI assistant will answer clarifying questions and offer contextual hints, giving interviewers insight into how candidates reason, make decisions, and problem-solve.

Track AI Usage During Interviews (AI Add-on)

Get a clear view of a candidate’s thinking, iteration, and use of AI during interviews with Observation Mode. Interviewers can see all AI Assistant interactions in real time, making it easier to understand a candidate’s approach.

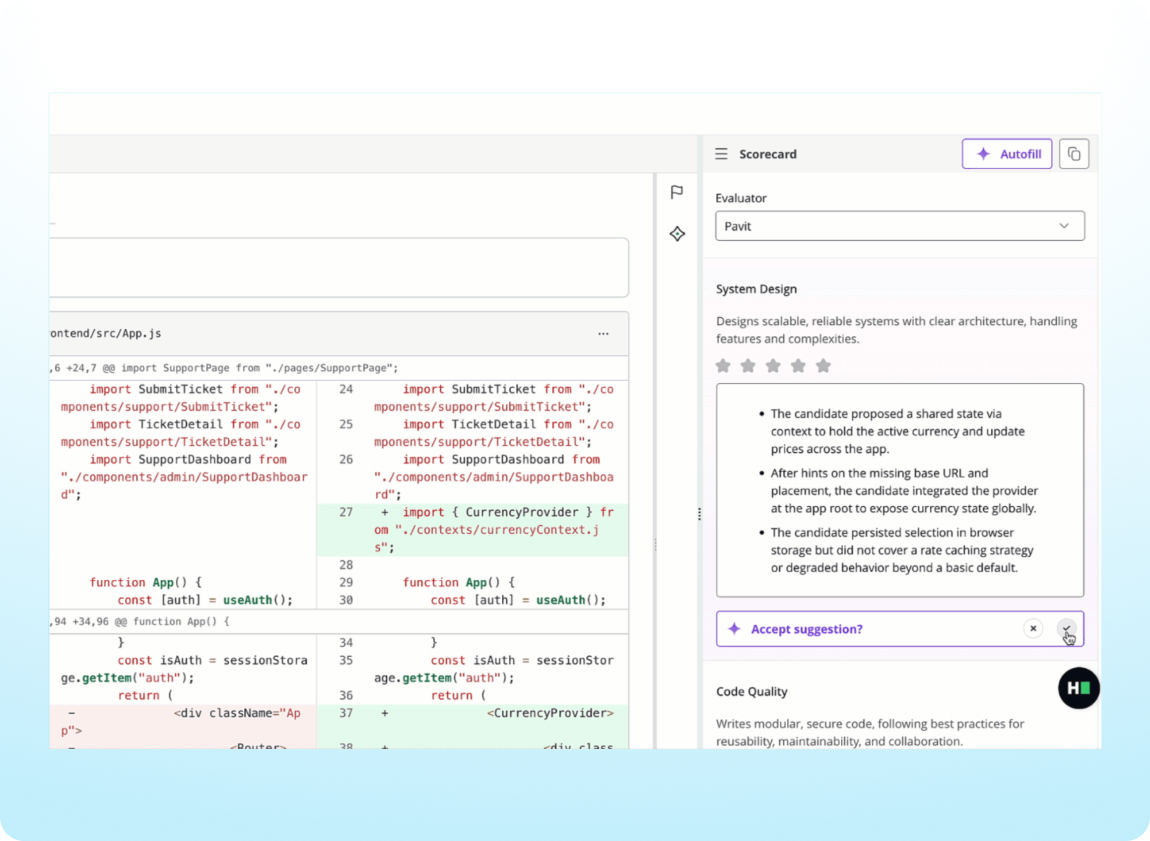

Spend Less Time on Interview Feedback (AI Add-on)

Complete interview scorecards faster with auto-populated summaries based on the candidate’s performance and interview transcript. Scorecard Assist is now available for interviews with project and code repository questions and helps you quickly deliver consistent, high-quality feedback across interviews.

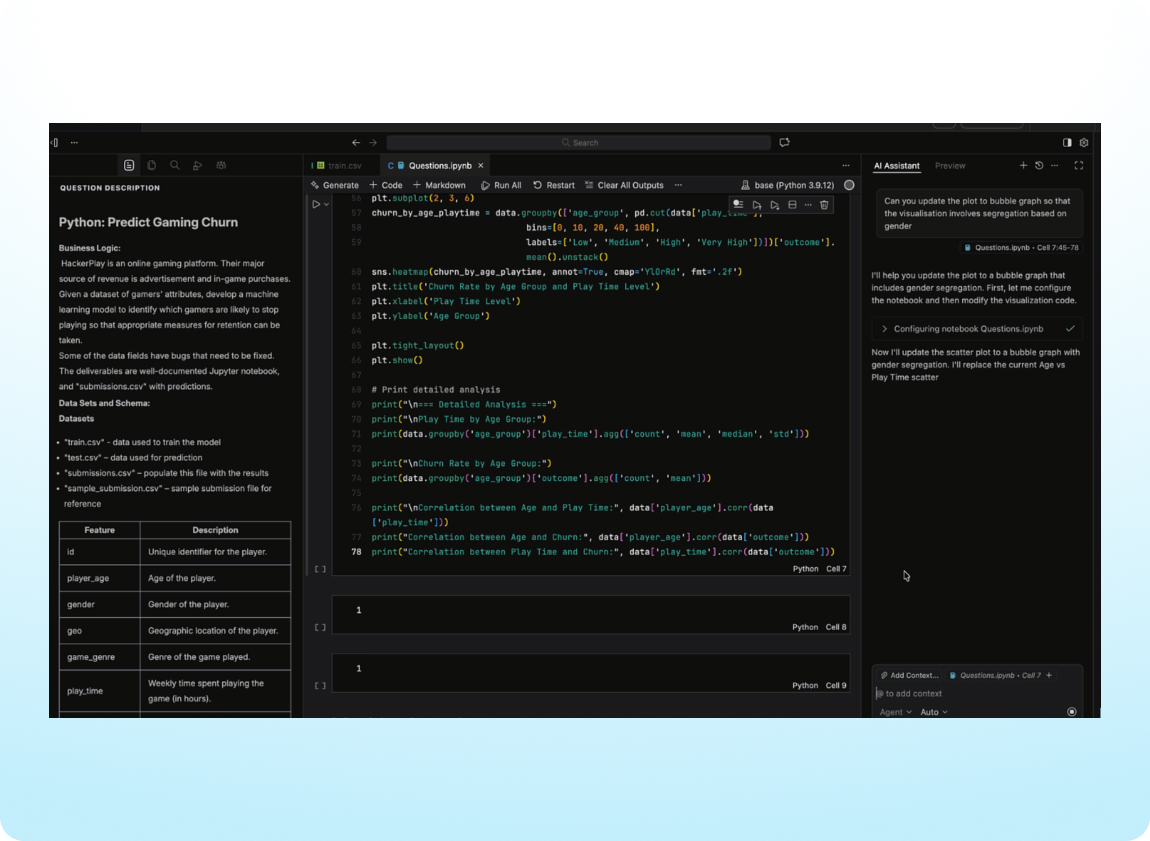

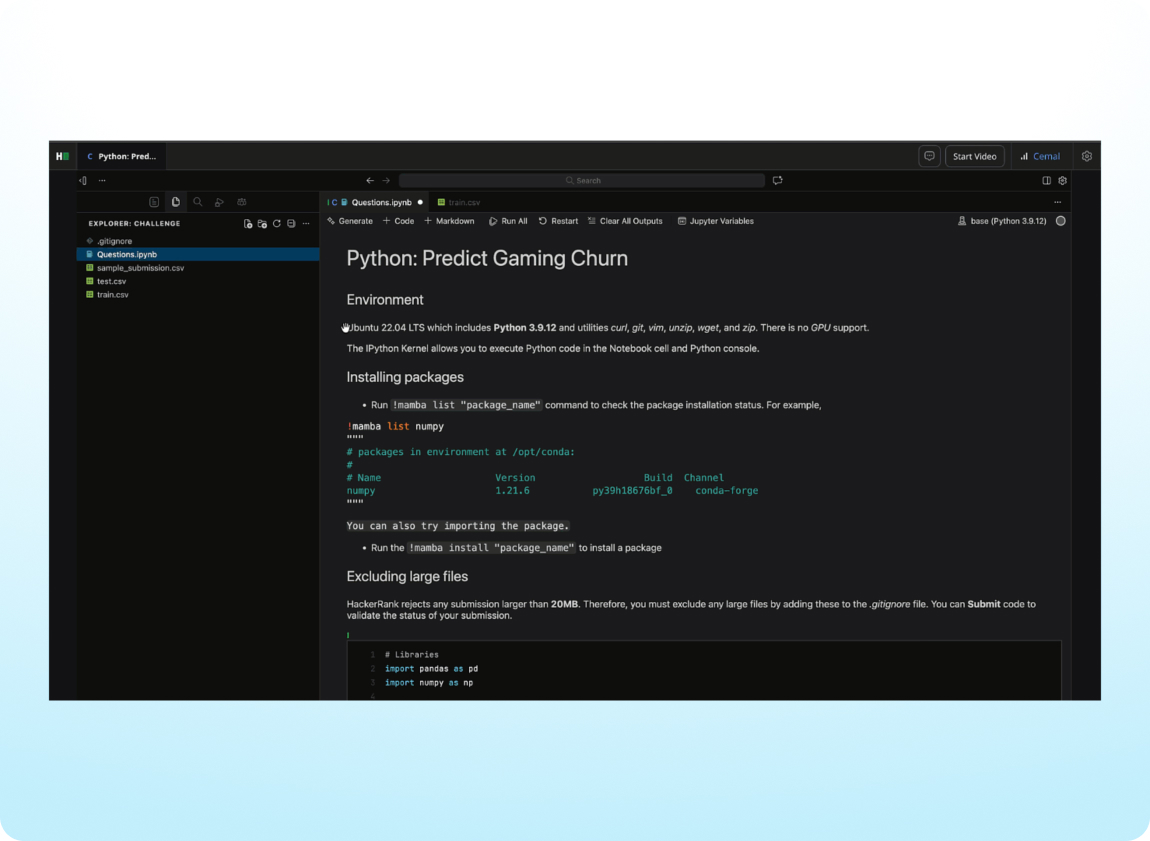

Assess How Data Science Candidates Use AI

Interviewers can see how data science candidates use AI in real time to explore data, debug code, and build solutions, revealing their problem-solving approach, judgment, and practical AI skills. AI Assistant capabilities are now available for data science questions in interviews with VS Code, supporting chat and agent modes within notebooks.

Integrity

Detect Suspicious Objects in Webcam Feeds (AI Add-on)

Protect the integrity of assessments by automatically identifying the use of unauthorized objects during proctored tests. When Proctor Mode is enabled for tests, suspicious objects such as mobile phones and tablets are detected in the candidate’s webcam feed and surfaced for review alongside the existing integrity signals.

Identify Unauthorized Collaboration (AI Add-on)

Identify behavior that may indicate outside assistance. Deleted code is now analyzed during assessments to detect chat-like activity patterns, such as repeatedly typing and deleting messages. These patterns can suggest screen-sharing or other forms of unauthorized collaboration and are flagged for review alongside the existing integrity signals.

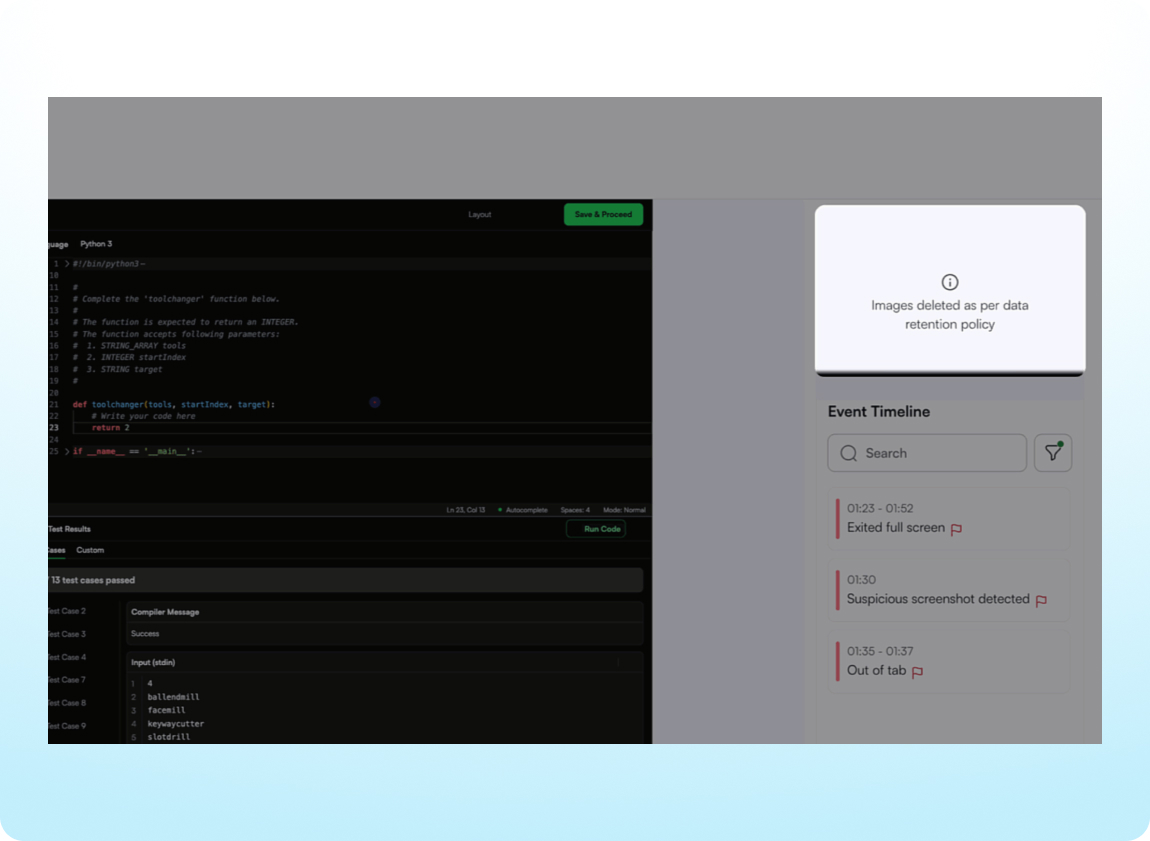

Set a Company-Wide Image Retention Policy

Meet company-level privacy and compliance requirements by specifying how long candidate images are stored. Choose a 30-, 45-, or 90-day retention window; images older than the defined window are automatically deleted, with notifications and reporting updates provided for transparency.

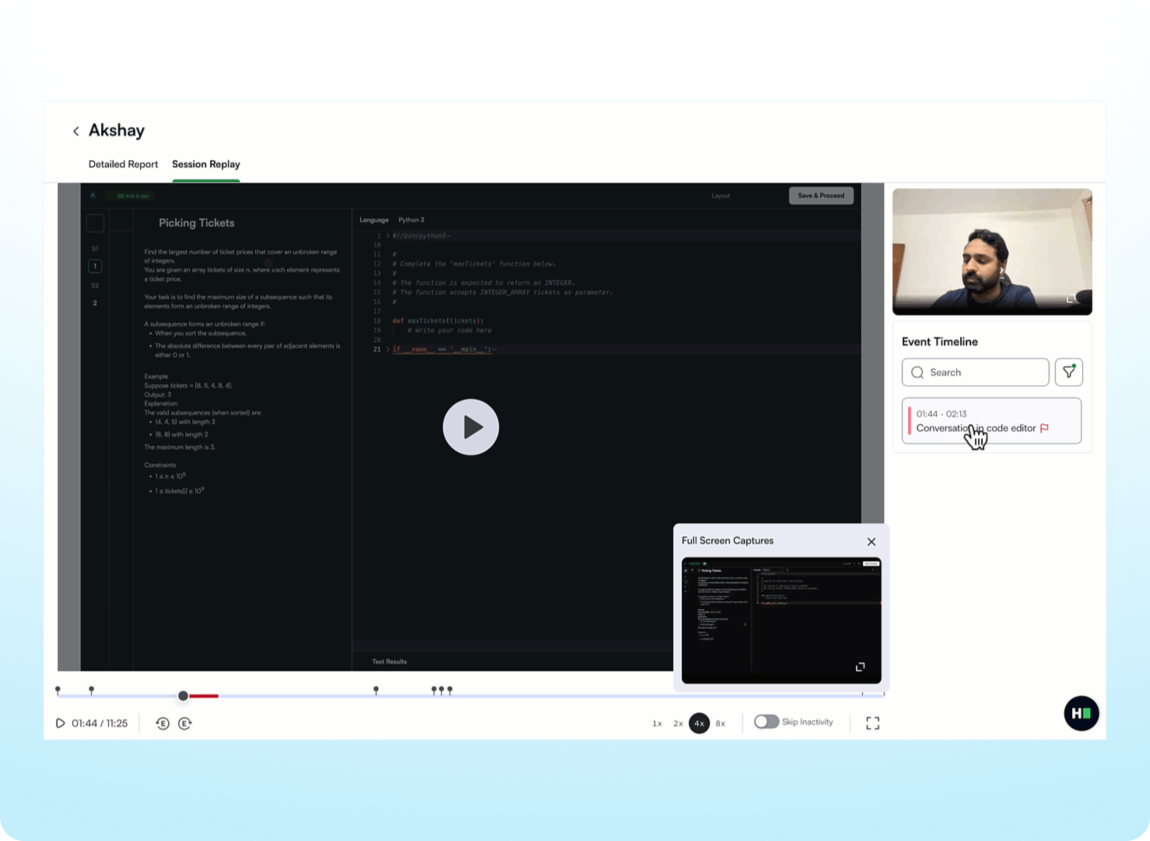

Validate Integrity Flags with Supporting Evidence

Integrity signals are now easier to review and assess. In the Summary Report, you can quickly understand what behavior was detected, when it occurred, and the evidence behind it, all in one place. Investigate potential issues faster using screenshot and image previews, tab and fullscreen activity with timestamps, code similarity comparisons, and direct access to session replays.

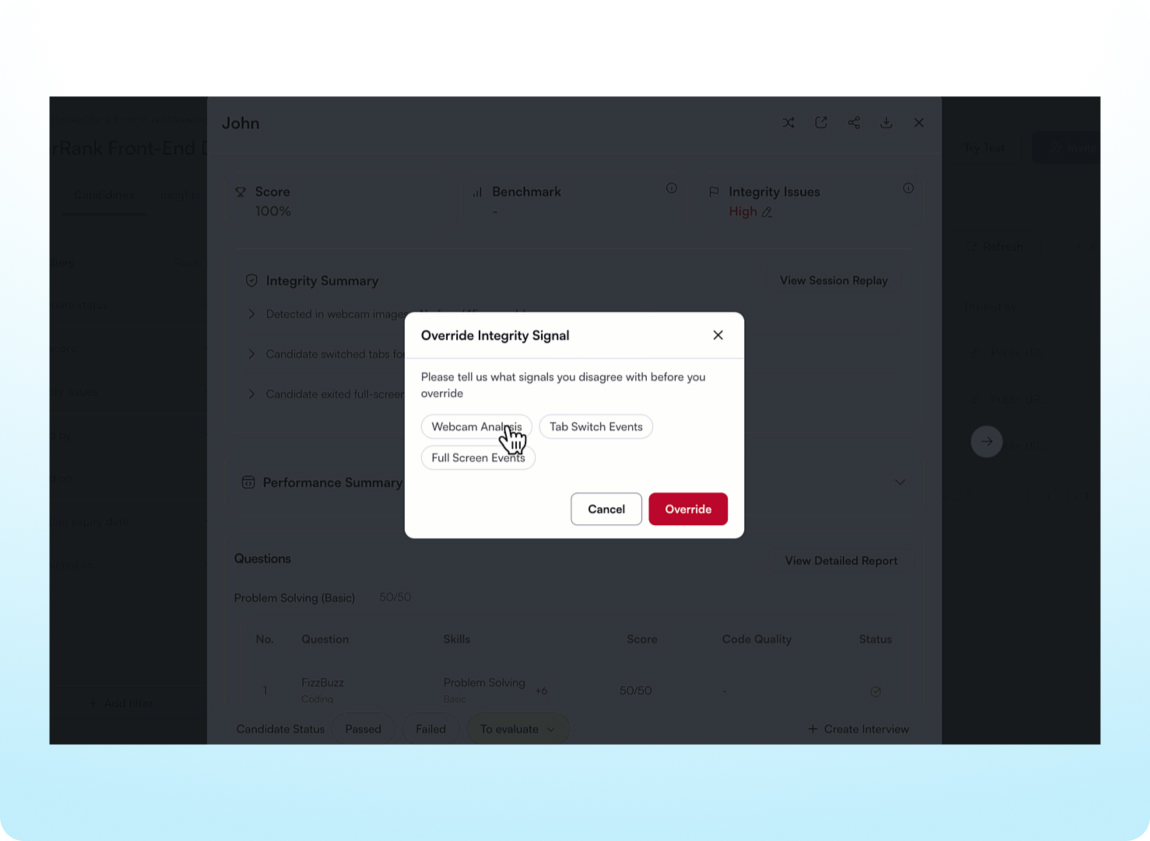

Take Action on Integrity Signals in Test Reports

Manage integrity flags directly in the Summary Report. When a flag isn’t warranted, override it and add context for other stakeholders, helping everyone make confident and informed hiring decisions.

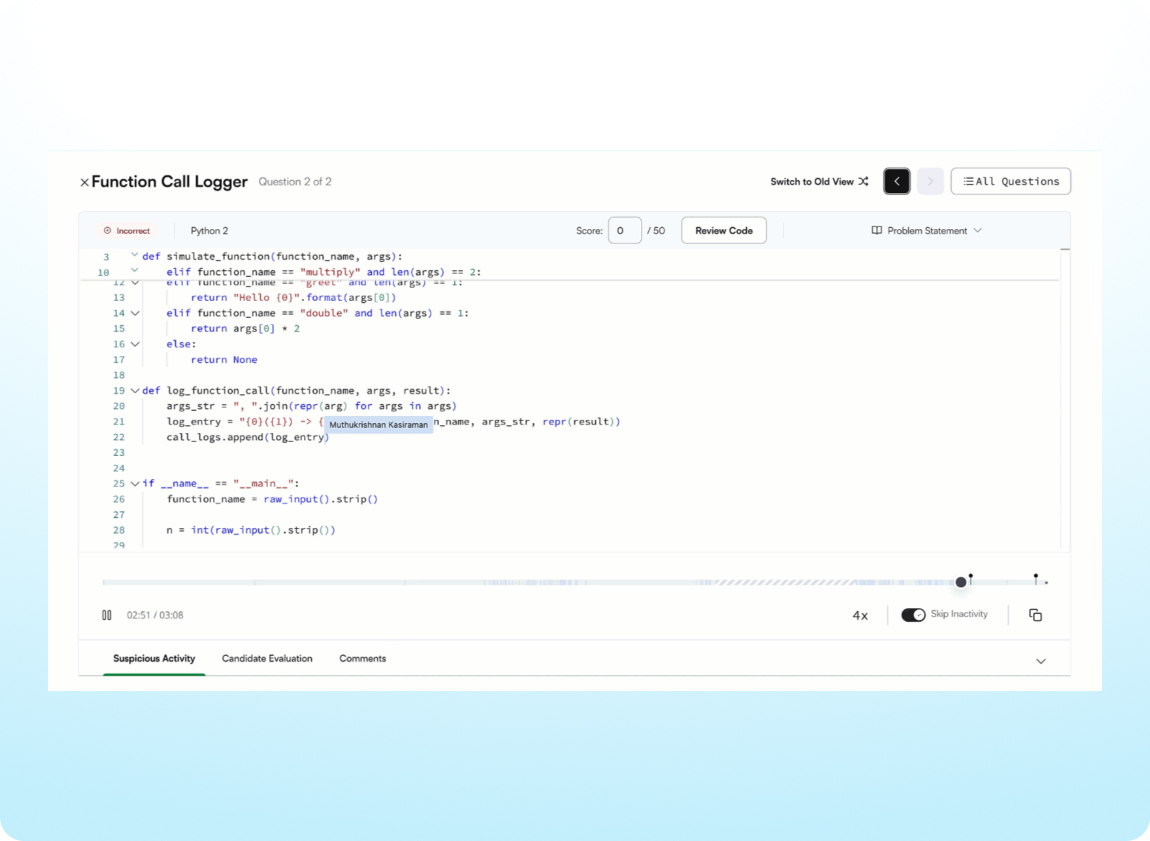

Review Complete Code Playback

Get a complete and dependable record of candidate activity, even when sessions are interrupted by network issues, page refreshes, or accidental tab closures. Improved recovery and monitoring reduce incomplete playbacks, ensure fewer sessions are lost, and clearly surface any impacted sections.

Developer Experience

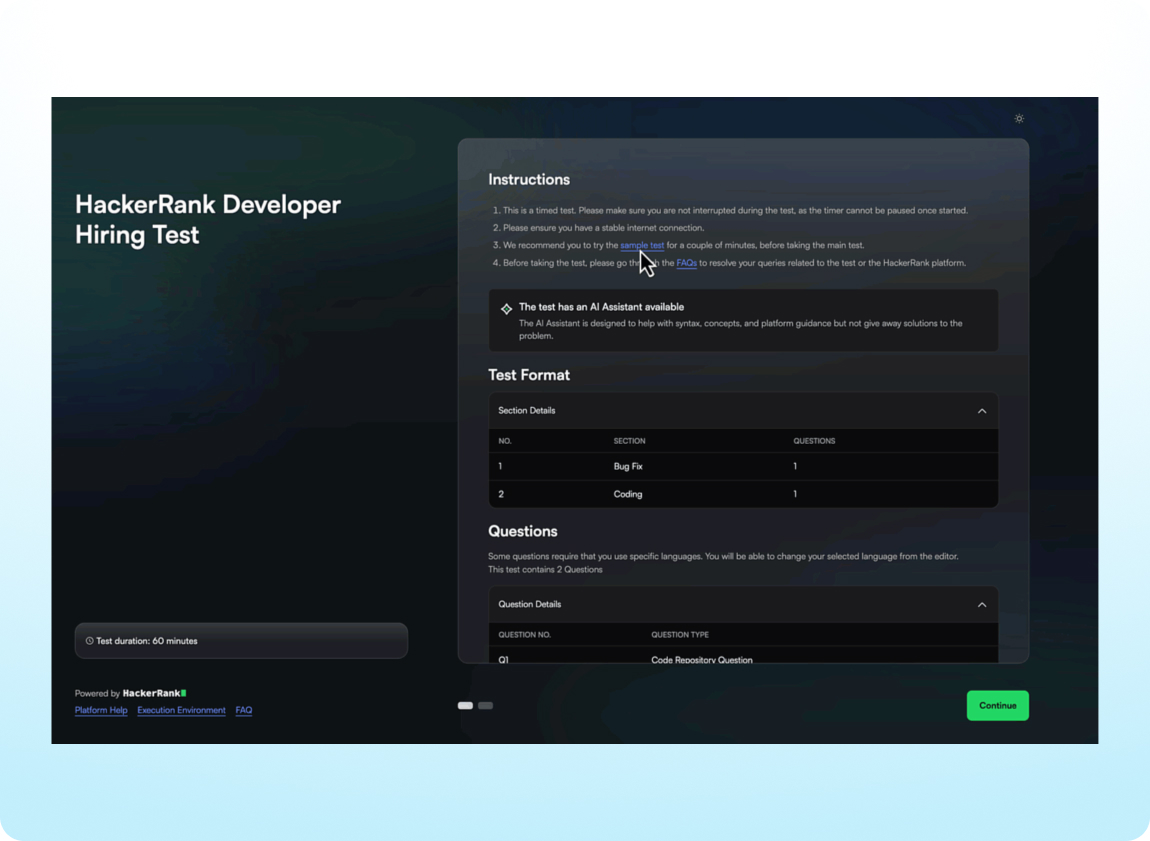

Give Candidates a Modern Test-Taking Experience

The candidate experience has been refreshed with a cleaner interface and simpler onboarding across all question types. Candidates can get started faster with clear instructions available both before login and during the test.

Set Up Code Repository Questions Faster (AI Add-on)

Candidates can now get up and running faster with code repository questions. Code repository installs and builds have been optimized, reducing setup time by about 70%.

Conduct Realistic Data Science Assessments

Data science candidates now complete assessments in Visual Studio Code (VS Code), the same professional coding environment they would use on the job. This update provides a more consistent experience across roles, while still supporting notebook-based work with AI help for exploring data and reviewing code.

Practice with Sample Code Repository Projects (AI Add-on)

Candidates can prepare for a live assessment by exploring a similar multi-file, code repository–based environment to get an understanding of expectations ahead of time. Every test that includes a Code Repository question now comes with a paired sample project, reducing surprises and allowing candidates to feel confident during the real assessment.

Experience a Smoother AI-Assisted IDE (AI Add-on)

Work with an improved AI Assistant experience that loads faster and supports smoother checkpoint restores, making it quick to return to a previous state when needed. In interviews, candidates can now choose when to share the problem statement with the AI Assistant, making it easier to assess task understanding and how effectively AI is used.

Integrations

Easily Connect Your Recruiting Tools

Integrate HackerRank with more of the tools you already use, including Asana, Microsoft Teams, and Notion. Our new Zapier integration lets you automatically share HackerRank updates, such as test completions and interview feedback, in your favorite tools without any engineering help.

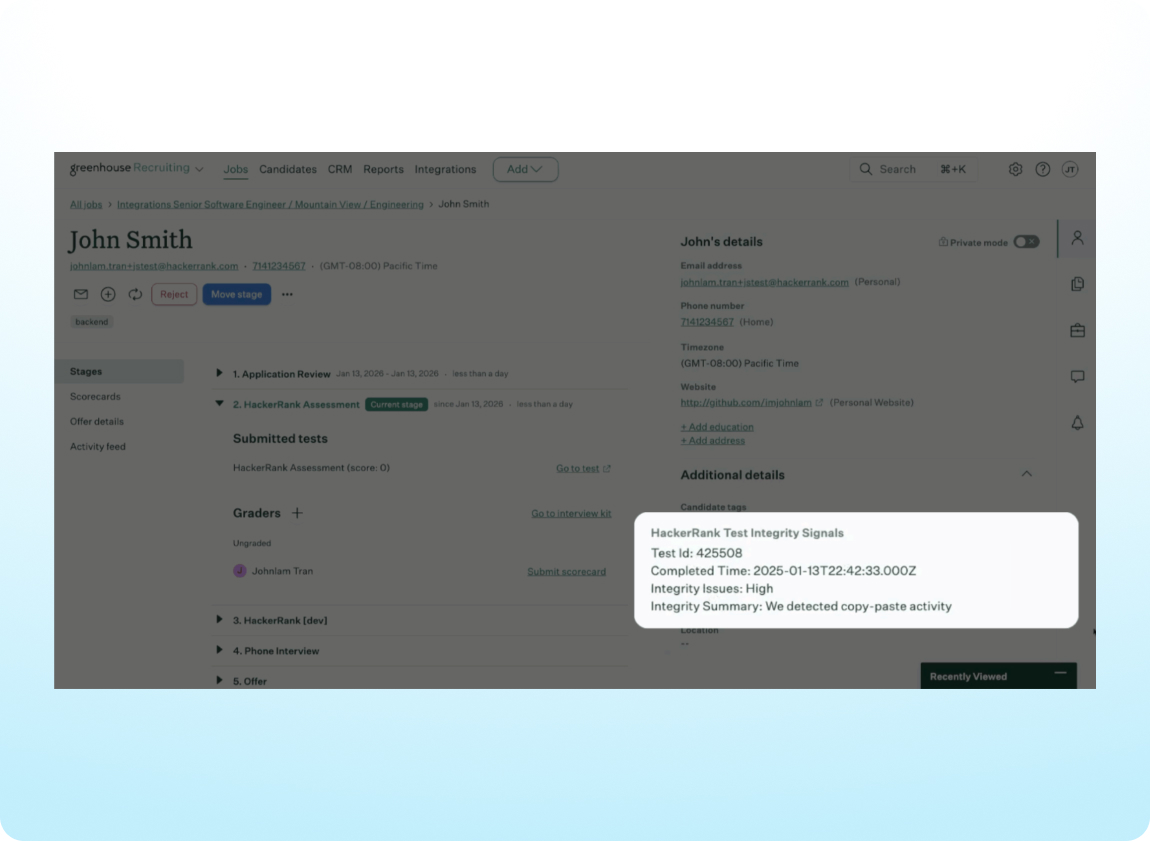

View Integrity Signals Directly in Your ATS

Get a consistent snapshot of candidate integrity in your Greenhouse, iCIMS, or Workday ATS through two synced data points: an Integrity Status (None, Medium, or High) and an Integrity Summary highlighting flagged behaviors such as copy-and-paste activity. Full integrity details and deeper context remain available in the HackerRank assessment report.

Engage

SkillUp

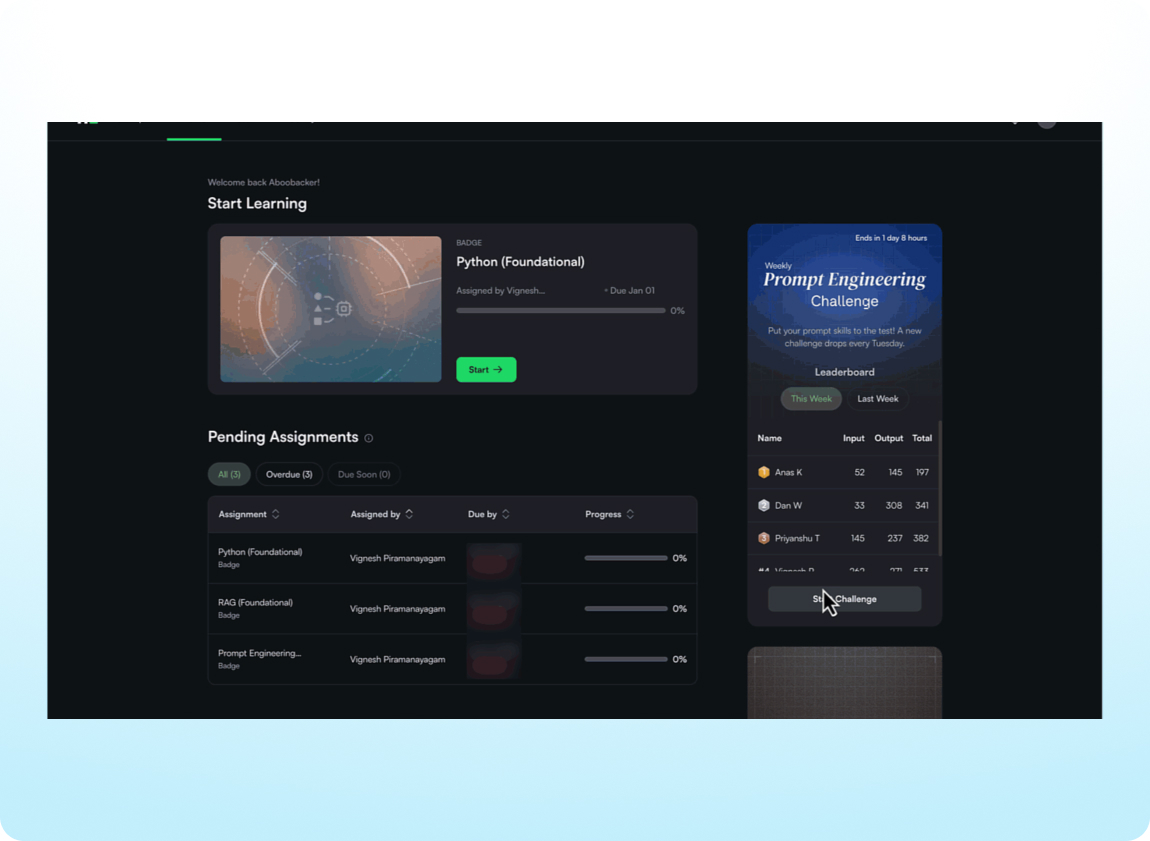

Build AI Skills with a Weekly Prompt Engineering Challenge

Give your team a fun way to hone their AI skills over time. Each week in SkillUp, developers can practice writing prompts to solve real problems and earn a spot on an org-wide leaderboard. Help them stay current with some friendly competition and track engagement and participation across the team.

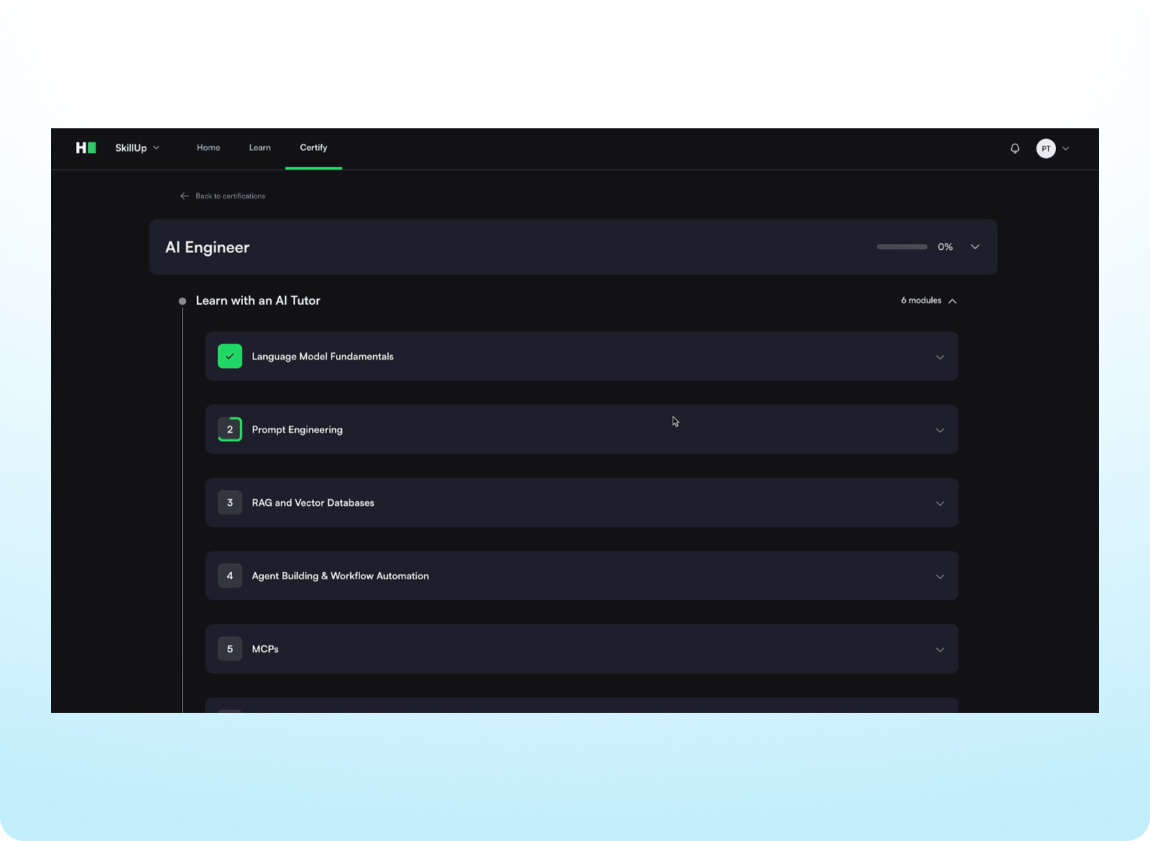

Become a Certified AI Engineer

Build expertise in creating AI-powered applications and develop next-gen developer skills. The AI Engineer Certification is now available in SkillUp with guided lessons and hands-on challenges to develop practical expertise in using AI to work more effectively.

Developer Community

Platform

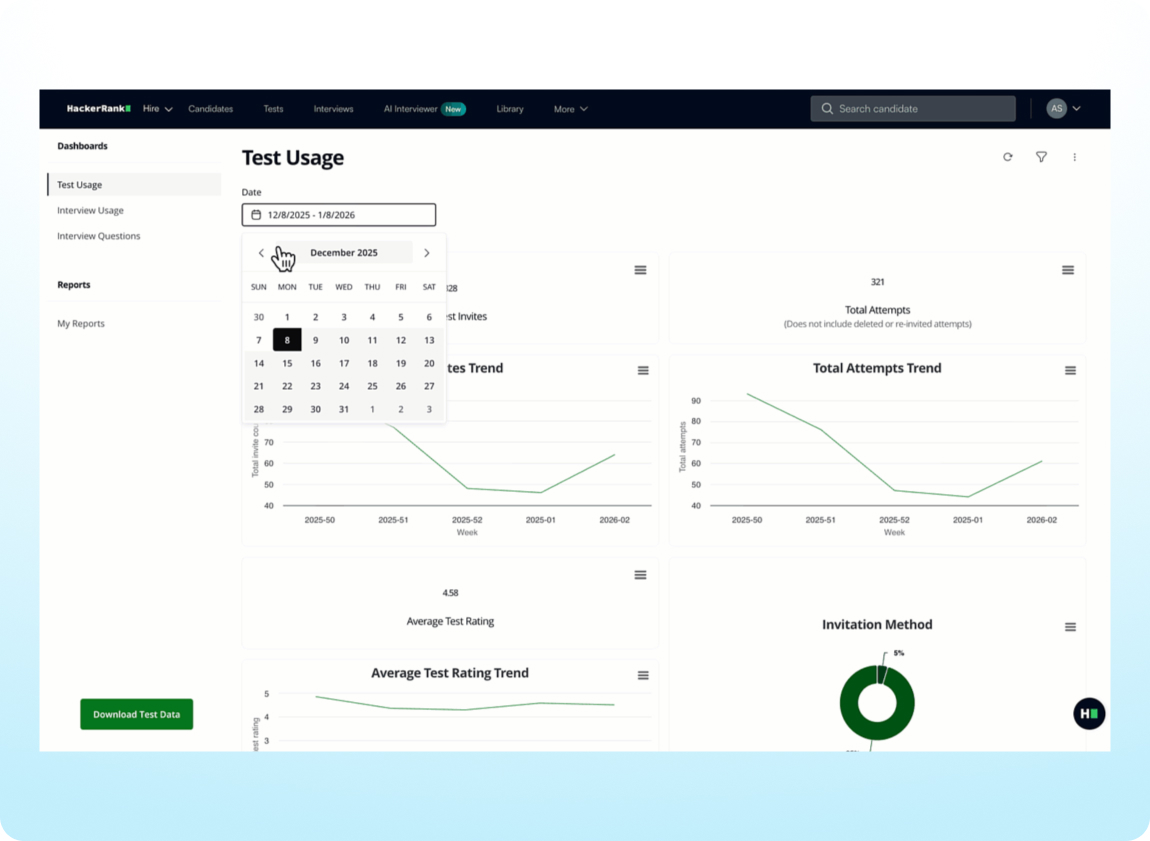

Explore Insights Dashboards and Exports Faster

Insights dashboards and exports now load significantly faster across the platform. Reports that previously took 15 seconds or more can now load in as little as 3 seconds, helping teams explore data quickly and spend less time waiting on routine reporting.

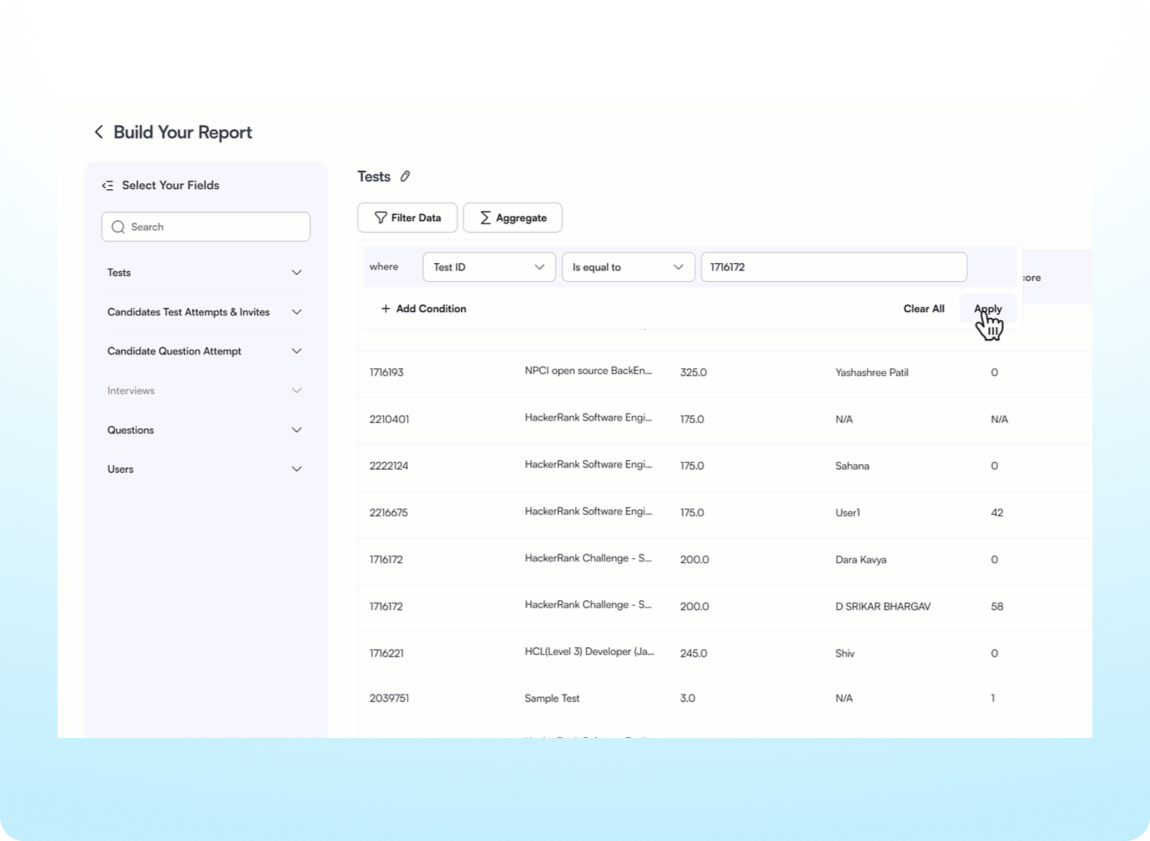

Filter and Segment Custom Reports

Custom Reports let you access and analyze your HackerRank data on demand. With advanced filtering, you can now drill into specific data segments, explore results in greater detail, and focus on the exact data you need when building and sharing reports.

Assess Candidates with More Job-Relevant Questions

Find hundreds of new and refreshed questions that reflect role-specific tasks in the HackerRank Library. Assess candidates more accurately with real-world projects and code repository challenges, updated technologies, and clearer problem statements.

Platform Enhancements

Skills Platform

Library Enhancements

Admin

Data & Insights

Explore new Jan'26 release materials

Register now to watch the latest insights from our product experts